Function Creep in Biometrics: Concerns & Real Examples

Biometric technologies such as fingerprint scanning, facial recognition, and palm vein scanning are now widespread, offering strong security and user convenience. Yet a troubling phenomenon known as function creep is casting a shadow over these advancements. Function creep happens when biometric data, gathered for a defined purpose, is later used for unrelated activities without clear permission or disclosure.

Because biometric identifiers such as your retina or voice pattern cannot be changed, this practice raises profound ethical, privacy, and security issues. This article explains how function creep happens, what it puts at risk, and four documented cases that show its real-world consequences.

What Is Function Creep in Biometrics?

Function creep in biometrics refers to the unauthorized or undisclosed expansion of biometric data applications beyond their initial intent. For instance, a voiceprint collected for banking authentication might later be repurposed for targeted advertising or government tracking without the user’s knowledge. This gradual broadening of data use often begins with practical motivations—organizations aim to leverage existing datasets for efficiency or innovation.

However, such shifts can erode user confidence and expose individuals to risks, especially since biometric data, unlike passwords, cannot be reset. Recognizing the mechanics of function creep is essential to mitigating its dangers.

Why Does Function Creep Raise Serious Concerns?

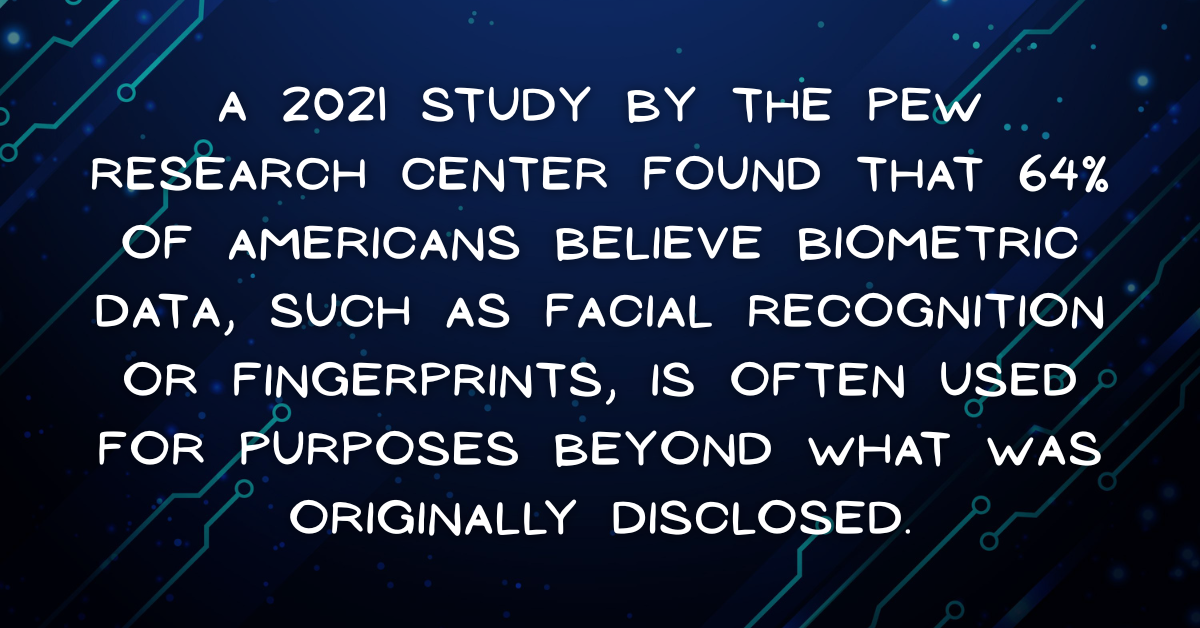

The unchecked expansion of biometric data usage introduces a range of threats, from personal privacy breaches to systemic societal harm. Below, we explore the core issues.

Erosion of Privacy

When biometric data is repurposed without consent, individuals lose authority over their personal identifiers. A fingerprint scan submitted for travel verification, for example, could be shared with marketing firms or surveillance agencies, leaving users unaware and powerless. This violation of privacy undermines faith in both technology providers and public institutions.

Security Risks

Expanding the scope of biometric data use makes databases more attractive to cybercriminals. A single breach in a system storing multi-purpose biometric records could compromise data across sectors—healthcare, finance, or law enforcement—resulting in severe consequences like fraud or identity theft.

Ethical Issues

Function creep often sidesteps ethical standards such as informed consent and necessity. Using biometric data for unforeseen purposes, like behavioral profiling or monitoring, can exploit vulnerable communities, particularly in regions with limited legal protections.

Why Is Regulation Lagging?

Many nations lack robust frameworks to govern biometric data, enabling function creep to proliferate. Without stringent laws, organizations face minimal repercussions for repurposing data, leaving users exposed. Key concerns include:

- Loss of Autonomy: Individuals are often unaware their biometric data is being used for new purposes, stripping them of agency.

- Risk of Discrimination: Expanded data use can fuel biased profiling, disproportionately affecting marginalized groups.

- Erosion of Trust: Opaque data practices diminish public confidence in biometric systems and their operators.

Real-World Examples of Function Creep in Biometrics

To highlight the tangible effects of function creep, we examine four notable cases where biometric systems were extended beyond their original purposes, detailing the fallout and responses.

Clearview AI and Law Enforcement

Original Purpose

Clearview AI built a facial recognition database by harvesting billions of images from social media, images that users had posted for social sharing rather than identification. The product was initially marketed for niche security purposes.

Creep Mechanism

The company supplied this database to law enforcement agencies globally for suspect identification, far exceeding the original scope of data collection.

Impact

This unauthorized use triggered global backlash, as social media users were oblivious that their images were fueling police surveillance, raising privacy concerns.

Response

Clearview AI faced lawsuits and fines in several countries for unethical data practices, spurring demands for tighter facial recognition laws.

Aadhaar in India

Original Purpose

India’s Aadhaar system, the largest biometric ID program worldwide, collects iris and fingerprint data to provide unique IDs for accessing public services like subsidies.

Creep Mechanism

Private entities and government bodies accessed Aadhaar data for unrelated tasks, such as authenticating telecom subscribers or loan applicants, often without explicit consent.

Impact

Reports of data breaches and unauthorized access heightened fears of mass surveillance and privacy violations within this vast biometric repository.

Response

Public outcry and legal battles have driven calls for stronger data protection laws in India, though gaps in enforcement remain.

7-Eleven’s Retail Surveillance in Australia

Original Purpose

In 2021, 7-Eleven Australia deployed facial recognition in stores to enhance security and deter shoplifting.

Creep Mechanism

The biometric data was also used to create customer demographic profiles for marketing campaigns, without informing or obtaining consent from shoppers.

Impact

The discovery of this practice sparked public anger and regulatory inquiries, as customers felt betrayed by the undisclosed data use.

Response

Australia’s privacy authority, the Office of the Australian Information Commissioner (OAIC), launched an investigation, and 7-Eleven faced pressure to overhaul its biometric practices, underscoring the need for transparency.

Google’s Biometric Data Practices

Original Purpose

Google collects biometric data, such as facial scans and voiceprints, via services like Google Assistant and Photos for user authentication and feature enhancement.

Creep Mechanism

In a lawsuit filed in 2022 and settled in 2025, Texas Attorney General Ken Paxton alleged Google used this data to train AI models and build user profiles for purposes beyond the original intent, without proper consent.

Impact

These claims fueled concerns about function creep in consumer technology, as users were unaware their biometric data was feeding broader AI or advertising initiatives.

Response

The lawsuit prompted calls for more transparent data policies, though global biometric data standards remain fragmented.

How Can We Mitigate Function Creep?

Combating function creep demands a multifaceted approach to align technological innovation with ethical accountability. Below, we outline actionable strategies to address its risks.

Strengthening Regulation

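Governments must establish precise laws to restrict biometric data use, enforce transparency, and impose penalties for violations. The EU’s GDPR provides a foundation: it treats biometric data used to identify a person as a special category of personal data and sets out a general purpose limitation principle. Tailored biometric regulations are still needed to curb function creep effectively.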

Enhancing User Consent

Organizations should adopt clear, user-centric consent processes, ensuring individuals understand data purposes and can opt out. Intuitive interfaces that clarify data uses before collection can empower users to make informed decisions.
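As a rough illustration of what per-purpose consent with opt-out could look like in software, the sketch below records consent separately for each purpose and lets a user withdraw it. The `ConsentLedger` class, the user ID, and the purpose names are hypothetical, invented for this example; a real system would also need persistent storage and an audit trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Hypothetical record of which purposes each user has agreed to."""

    # Maps (user_id, purpose) to the time consent was granted.
    _grants: dict = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        # Consent is stored per purpose, never as a blanket permission.
        self._grants[(user_id, purpose)] = datetime.now(timezone.utc)

    def withdraw(self, user_id: str, purpose: str) -> None:
        # Opting out removes the grant; later checks for this purpose fail.
        self._grants.pop((user_id, purpose), None)

    def allows(self, user_id: str, purpose: str) -> bool:
        return (user_id, purpose) in self._grants


ledger = ConsentLedger()
ledger.grant("user-17", "authentication")
print(ledger.allows("user-17", "authentication"))  # True
print(ledger.allows("user-17", "marketing"))       # False
ledger.withdraw("user-17", "authentication")
print(ledger.allows("user-17", "authentication"))  # False
```

Keying consent on the (user, purpose) pair means a new use of the data has no grant by default, so it requires a new, explicit request to the user.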

Implementing Technical Safeguards

To limit unauthorized data repurposing, organizations can employ:

- Data Minimization: Collect only essential biometric data for the intended function.

- Encryption and Anonymization: Encrypt stored biometric templates and strip or pseudonymize identifying details, so that data is harder to misuse even if a breach occurs.

- Purpose Limitation: Use siloed systems to restrict data access to its original purpose.
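The purpose limitation idea above can be sketched in code. The example below is a minimal illustration, not a production design: `PurposeBoundStore`, `PurposeViolation`, and `silo_id` are hypothetical names made up for this article. Each record is tagged with the purpose it was collected for, reads for any other purpose are refused and logged, and a keyed hash gives each silo its own pseudonymous identifier for the same user.

```python
import hashlib
import hmac


class PurposeViolation(Exception):
    """Raised when data is requested for a purpose it was not collected for."""


class PurposeBoundStore:
    """Hypothetical store that ties each template to its collection purpose."""

    def __init__(self):
        self._records = {}  # template_id -> (purpose, template bytes)
        self.denied = []    # log of refused (template_id, purpose) requests

    def enroll(self, template_id, purpose, template):
        self._records[template_id] = (purpose, template)

    def read(self, template_id, requested_purpose):
        purpose, template = self._records[template_id]
        if requested_purpose != purpose:
            # Record the attempt so repurposing requests can be audited.
            self.denied.append((template_id, requested_purpose))
            raise PurposeViolation(
                f"collected for {purpose!r}, requested for {requested_purpose!r}"
            )
        return template


def silo_id(user_id, purpose, secret):
    """Derive a per-purpose pseudonym so silos cannot be joined on user_id."""
    message = f"{purpose}:{user_id}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


store = PurposeBoundStore()
store.enroll("t1", "store-security", b"<encrypted template>")
try:
    store.read("t1", "marketing")
except PurposeViolation as err:
    print("denied:", err)
```

The check does not stop a determined insider on its own, but it makes repurposing a deliberate, logged act rather than a silent default. The per-purpose pseudonyms assume the secret key is held separately from the silos.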

Raising Public Awareness

Informing users about function creep equips them to hold organizations accountable. Media campaigns and educational initiatives can highlight risks and encourage scrutiny of biometric practices.

These measures face hurdles, such as industry pushback and the slow pace of global regulatory harmonization. Yet, they are critical to safeguarding users as biometric adoption grows.

Why Must We Stay Vigilant?

Function creep in biometrics poses a significant threat to privacy, security, and trust in an era where these technologies are increasingly pervasive. The cases of Clearview AI, Aadhaar, 7-Eleven, and Google demonstrate how biometric data, collected for specific purposes, can be repurposed in ways that undermine user autonomy and expose them to risks. By bolstering regulations, improving consent mechanisms, deploying technical protections, and fostering public awareness, we can address these challenges. As biometric systems advance, remaining proactive ensures their benefits—enhanced security and convenience—are not outweighed by the erosion of our fundamental rights.